BiAS AND VARIANCE TRADE OFF Basic question in machine learning is // y = f(x) + random error given data xi, yi estimate a model fN(x) // Minimizing the expectation of difference between prediction and true observation, which is found to be Bias**2 + Variance of the model itself + irreducible error(chaotic nature that cannot be removed) Do a mind experiment: a simple model such as linear regression, given N points, try a linear regression. On one hand, linear model will be dependent on the training dataset you have in hands. So there is a variability in your linear model, this is called variance of the model itself. On the other hand, the distance between the regression line and training dataset, can be described as a L2 error. A nature instinct is the deviation of data away from the straight line. In total, they will be bias error. According to Wiki, can be thought as the error caused by the simplifying assumptions built into the method. E.g., when approximating a non-linear function f(x) using a learning method for linear models, there will be error in the estimates f(x) due to this assumption. How to measure bias error and variance error?

Be careful! Even if the principle behind the wheel is a relation that independent of the training data, even if it was extremely simple, the method you choose for determining the true model (ML), actually determines what kind of variance you should expect. COMMENTS ON R2

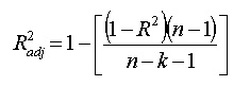

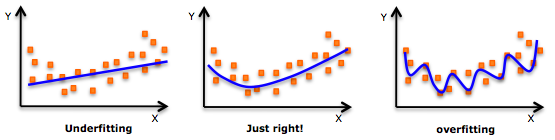

do we need to get more data? If you have 200 points, let say, it is good to try a cross validation with varying training sample size(also accomplished by sklearn learning curve), if predicted results has reached a extrema, then let's call it stop, because data is not that needed any more. so far the mdoel is good enough for current data. Adjusted R squared and r squared  People realize that R squared in linear regression analysis can be misleading: -> adding more and more factors will ALWAYS increase the R squared, no matter it is useful or not. So how to choose a R squared that will guide us to add useful variable? ---> choose adjusted R squared. n is total number of samples, k is the number of factors in your regression model The mechanism is that adjusted R squared will only increase when you add the right variable, that causes R^2 to increase, then it overweights the increase of k, resulting a increased adjusted R squared. For more information, visit: http://www.statisticshowto.com/adjusted-r2/ uNDerfitting and overfitting

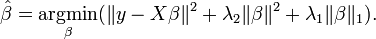

Ridge regression + lasso regression  bai Both ridge and lasso are regularization of linear regression to reduce variance of the model. Ridge Ridge regression considers a penalty on L2 norm of coefficients in order to achieve reduced variance in models, based on the belief that smaller coefficient correlates closely to variance of model itself. Lasso Lasso model considers a L1 norm of penalty imposing on coefficient which based on the same argument for ridge. However, if there is a group of highly correlated variables, then the LASSO tends to select one variable from a group and ignore the others. The alpha is selected usually automatically to minimize the cross-validation error. Difference between Lasso and Ridge Lasso is able to achieve both of these goals by forcing the sum of the absolute value of the regression coefficients to be less than a fixed value, which forces certain coefficients to be set to zero, effectively choosing a simpler model that does not include those coefficients. This idea is similar to ridge regression, in which the sum of the squares of the coefficients is forced to be less than a fixed value, though in the case of Ridge regression, this only shrinks the size of the coefficients, it does not set any of them to zero. So in general, Lasso is an aggressive method, can select features, while Ridge is more conservative than Lasso, can only suppress overfitting. So if you are confident about the independence of your feature, use Ridge is safer than Lasso. Used as feature selection However! ONLY Lasso can be used as a tool of feature selection! If you try different alpha and plot the coef_ from fitted linear regressor, you will see as alpha increases, how does the coefficient vary and see if some feature can be eliminated at the first place. Elastic Net For a group of highly correlated data, lasso tends to eliminate all but one variable randomly. Elastic Net is the method of Ridge + Lasso, which is shown on the right. So Elastic Net can do feature selection, coefficient shrinkages. And the quadratic penalty term makes the loss function strictly convex, and it therefore has a unique minimum. So no random behaviour as Lasso. Stochastic Gradient Descent - SGD Regressor The class SGDRegressor implements a plain stochastic gradient descent learning routine which supports different loss functions and penalties to fit linear regression models. SGDRegressor is well suited for regression problems with a large number of training samples (> 10.000), for other problems we recommend Ridge, Lasso, or ElasticNet. So basically, it is still a linear model regressor but with fast speed. However, some tips has to be mentioned. Tip 1:

from sklearn.preprocessing import StandardScaler scaler = StandardScaler() scaler.fit(X_train) # Don't cheat - fit only on training data X_train = scaler.transform(X_train) X_test = scaler.transform(X_test) # apply same transformation to test data // For more information, please refer to http://scikit-learn.org/stable/modules/sgd.html PreprocessingTo generate features from raw data, in order to perform certain regression, preprocessing is used to generating those features. For example, for poly features, use the following command. pp_pf = preprocessing.PolynomialFeatures(degree=3) Also, if the input is more than one column, the result will contain interaction features. See the following in help documents. Generate polynomial and interaction features. Generate a new feature matrix consisting of all polynomial combinations of the features with degree less than or equal to the specified degree. For example, if an input sample is two dimensional and of the form [a, b], the degree-2 polynomial features are [1, a, b, a^2, ab, b^2]. classification LOGISTIC REGRESSION It sounds like it is a regression method but it is not. Actually regression is not that far away from classification. In some extent, they are almost the same. However, threshold is a interesting thing in classification, which also reflects how human classify things. This classification method, logistic regression, is actually mapping the whole Y results, into [0,1] using a exp function. Then linear fitting for a log function appear in the derivation process. Finally threshold considered as 0.5. So a classification result is coming into being. Some comments from Wiki I think is helpful:

Decision treeDecision tree learning uses a decision tree as a predictive model which maps observations about an item to conclusions about the item's target value. It is one of the predictive modelling approaches used in statistics, data mining and machine learning. Tree models where the target variable can take a finite set of values are called classification trees. In these tree structures, leaves represent class labels and branches represent conjunctions of features that lead to those class labels. Decision trees where the target variable can take continuous values (typically real numbers) are called regression trees. SVMMore formally, a support vector machine constructs a hyperplane or set of hyperplanes in a high- or infinite-dimensional space, which can be used for classification, regression, or other tasks. Intuitively, a good separation is achieved by the hyperplane that has the largest distance to the nearest training-data point of any class (so-called functional margin), since in general the larger the margin the lower the generalization error of the classifier.

|

AuthorShaowu Pan Archives

December 2017

Categories

All

|

RSS Feed

RSS Feed